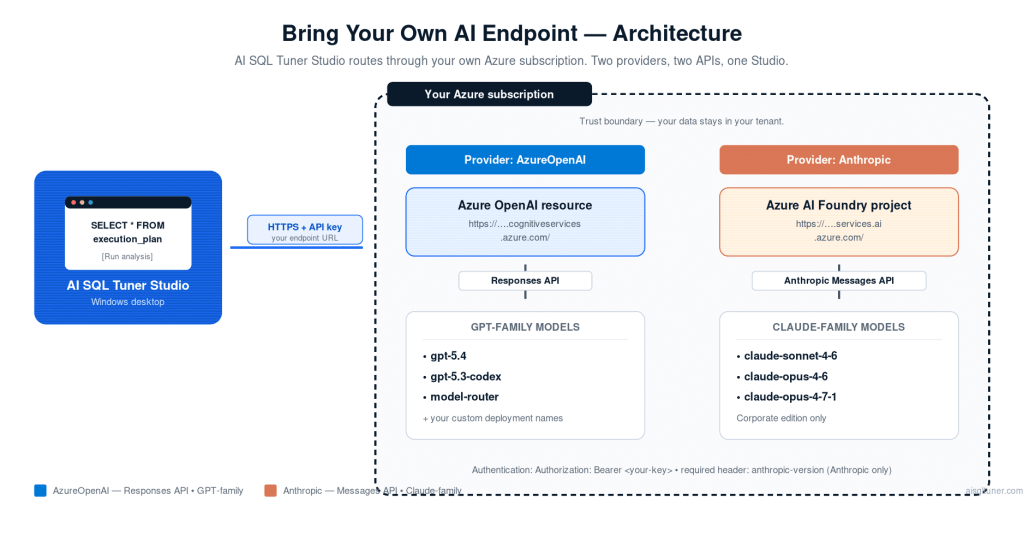

This guide explains how to configure AI SQL Tuner Studio to use your own Microsoft Foundry (Azure AI Foundry) or Azure OpenAI setup, including support for:

- GPT / Azure OpenAI deployments using the Responses API

- Anthropic Claude deployments hosted on Azure AI Foundry using the Anthropic Messages API

AI SQL Tuner Studio supports two providers in the UI:

- AzureOpenAI — for GPT-family deployments accessed through the Azure OpenAI Responses API

- Anthropic — for Claude-family deployments hosted on Azure AI Foundry and accessed through the native Anthropic Messages API

1) Prerequisites

- A Microsoft Foundry resource or Azure OpenAI resource in your Azure subscription

- A deployed model you want AI SQL Tuner Studio to use

- Your endpoint and API key

- Any edition of AI SQL Tuner Studio. See Compare AI SQL Tuner Studio Editions for details.

Depending on provider:

- AzureOpenAI: a GPT deployment name such as gpt-5.4, gpt-5.3-codex, or your own custom deployment name.

- Anthropic: a Claude model/deployment name such as claude-sonnet-4-6, claude-sonnet-4-5, claude-opus-4-6, or claude-opus-4-7-1, depending on your license and deployment availability.

1.1) Create a Microsoft Foundry resource (new Foundry portal)

Microsoft Foundry (formerly Azure AI Foundry) is the portal experience you use to create Foundry resources/projects and work with models and agents. Learn more about Azure AI Foundry.

- Open Microsoft Foundry: https://ai.azure.com/

- Ensure you’re using the new Foundry portal (there’s a banner toggle in the portal to switch between (new) and (classic)).

- Create a Foundry resource and a Foundry project (or select an existing project).

- In your project, locate the endpoint details you’ll use with AI SQL Tuner Studio:

- Endpoint URL

- API key

Notes:

- Use the root endpoint only. Do not append /openai, /models, /anthropic/v1/messages, or other path segments.

- AI SQL Tuner Studio expects the endpoint to end with /.

- If you don’t see the resource/project you expect in the new portal, use View all resources to open the classic experience.

1.1.1) Alternative: Provision an Azure OpenAI resource (Azure Portal)

- In the Azure Portal, search for Azure OpenAI and choose Create.

- Select your Subscription, Resource group, Region (must be supported for Azure OpenAI in your tenant), and Name (this becomes part of the endpoint host).

- Create the resource.

- After creation, open the Azure OpenAI resource:

- Copy the Endpoint (looks like https://<resource>.cognitiveservices.azure.com/).

- Go to Keys and Endpoint and copy Key 1 or Key 2.

Notes:

- The endpoint must be the resource endpoint (not a portal URL).

- Use the resource root, for example https://your-resource.cognitiveservices.azure.com/.

- Do not append /openai/responses, /chat/completions, or any other route.

- AI SQL Tuner Studio expects the endpoint to end with /.
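The endpoint rules above can be checked before you paste a value into the UI. The following is a minimal Python sketch, not the Studio's own validation: the function name normalize_root_endpoint is hypothetical, and requiring https is an assumption based on the example URLs in this guide.

```python
from urllib.parse import urlsplit


def normalize_root_endpoint(endpoint: str) -> str:
    """Return the endpoint as a root URL ending in '/', or raise ValueError."""
    parts = urlsplit(endpoint.strip())
    # Assumption: resource endpoints are always https URLs with a host name.
    if parts.scheme != "https" or not parts.netloc:
        raise ValueError("expected an https:// resource endpoint")
    # Anything after the host (for example /openai/responses) is a route,
    # which the notes above say must not be entered.
    if parts.path not in ("", "/") or parts.query or parts.fragment:
        raise ValueError("use the resource root only, without a path or query")
    # Rebuild the URL so it always ends with a single trailing slash.
    return f"https://{parts.netloc}/"
```

For example, normalize_root_endpoint("https://your-resource.cognitiveservices.azure.com") returns the same URL with a trailing slash, while a value ending in /openai/responses raises ValueError.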

1.2) Deploy a model (create a deployment)

For AzureOpenAI, AI SQL Tuner Studio uses a deployment name when calling the Azure OpenAI Responses API.

For Anthropic, AI SQL Tuner Studio sends the configured Claude model/deployment name to the Anthropic Messages API hosted behind Azure AI Foundry.

- Open Microsoft Foundry: https://ai.azure.com/

- Select your Foundry project.

- Go to your project’s models/deployments area.

- Select Create deployment.

- Choose the model you want to use (depends on what’s available in your region/tenant).

- Give it a Deployment name or note the deployed model name. Examples:

- GPT: gpt-5.4, gpt-5.3-codex, model-router

- Claude: claude-sonnet-4-6, claude-sonnet-4-5, claude-opus-4-7-1

- Create/save the deployment.

You will use this value in the Primary model field in the Studio UI.

2) Configure your AI endpoint in AI SQL Tuner Studio

- Launch AI SQL Tuner Studio.

- In the left panel, expand the AI Configuration section.

- Choose the AI provider:

- AzureOpenAI for GPT-family Azure OpenAI deployments.

- Anthropic for Claude-family Azure AI Foundry deployments.

- Fill in the following fields:

- AZURE_OPENAI_ENDPOINT: paste your Azure OpenAI endpoint URL (e.g., https://your-resource.cognitiveservices.azure.com/).

- AZURE_OPENAI_KEY: paste your API key.

- Primary model: enter your deployment/model name (for example gpt-5.4, model-router, or claude-sonnet-4-6).

- Secondary model (optional): enter a fallback deployment name for rate-limiting or large-request scenarios.

- Check Save key if you want the API key persisted locally for future sessions.

- Click Save AI settings to store your configuration.

Your settings are saved to %LOCALAPPDATA%\AI SQL Tuner Studio\settings.json. The API key is stored only if you explicitly opt in via the Save key checkbox.

2.1) Provider-specific behavior

AzureOpenAI provider

- Uses the Azure OpenAI Responses API.

- Uses the configured endpoint exactly as entered, for example https://your-resource.cognitiveservices.azure.com/.

- Expects GPT-family deployments that are compatible with the Responses API.

Anthropic provider

- Uses the native Anthropic Messages API on Azure AI Foundry.

- Starts from the same endpoint field you enter in the UI, then internally converts https://your-resource.cognitiveservices.azure.com/ to https://your-resource.services.ai.azure.com/.

- Calls POST /anthropic/v1/messages.

- Uses Authorization: Bearer <key> and the required anthropic-version header.

- You should still enter the root endpoint in the UI; the app constructs the Anthropic route for you.
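The host conversion and route construction described for the Anthropic provider can be sketched as follows. This is an illustration under stated assumptions, not the app's actual code: build_anthropic_request is a hypothetical helper, and the anthropic-version value 2023-06-01 is the version string from Anthropic's public API documentation rather than something this guide specifies.

```python
from urllib.parse import urlsplit

# Assumption: version string taken from Anthropic's public API docs;
# this guide only states that the header is required.
ANTHROPIC_VERSION = "2023-06-01"


def build_anthropic_request(root_endpoint: str, api_key: str) -> tuple[str, dict[str, str]]:
    """Derive the Messages API URL and headers from a root endpoint."""
    host = urlsplit(root_endpoint).netloc
    old_suffix = ".cognitiveservices.azure.com"
    # Swap the resource host for the Foundry inference host when applicable.
    if host.endswith(old_suffix):
        host = host[: -len(old_suffix)] + ".services.ai.azure.com"
    url = f"https://{host}/anthropic/v1/messages"
    headers = {
        "Authorization": f"Bearer {api_key}",
        "anthropic-version": ANTHROPIC_VERSION,
        "Content-Type": "application/json",
    }
    return url, headers
```

Because the route is appended to the host, a value that already contains /anthropic/v1/messages would not map cleanly, which is why the UI field should hold only the root endpoint.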

2.2) How the Studio selects models

The Studio automatically selects models based on request size:

- Requests within the primary character limit use the primary model.

- Larger requests can use the secondary model (if configured).

- Requests above the supported maximum are blocked with a prompt to reduce the request size.

In addition:

- If the primary deployment is rate-limited or at capacity, the app can retry with the secondary model.

- The actual model used is detected and shown in the console/log output when available.

Tip: The app automatically detects the actual model used when deployments route to different model versions. The model in use is shown in the console output during execution.
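The size-based selection above can be pictured with a small Python sketch. The thresholds and the function name select_model are hypothetical, since this guide does not publish the actual character limits, and rejecting an oversized request when no secondary model is configured is an assumption.

```python
from typing import Optional

# Hypothetical limits: the real thresholds are not documented in this guide.
PRIMARY_CHAR_LIMIT = 100_000
MAX_CHAR_LIMIT = 400_000


def select_model(request_chars: int, primary: str, secondary: Optional[str] = None) -> str:
    """Pick a deployment name for a request of the given size."""
    if request_chars > MAX_CHAR_LIMIT:
        raise ValueError("request is above the supported maximum; reduce its size")
    if request_chars <= PRIMARY_CHAR_LIMIT:
        return primary
    if secondary:
        return secondary
    # Assumption: with no secondary model, an oversized request is rejected.
    raise ValueError("request is too large for the primary model; configure a secondary model")
```

With these placeholder limits, a small request returns the primary deployment name and a request just over PRIMARY_CHAR_LIMIT returns the secondary one.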

2.3) Running an analysis with your endpoint

- Configure a SQL Server connection in the Connections section.

- Select a Tuning Goal (Server Health Check, Fix Deadlocks, Code Review, Index Tuning, Query Tuner, or Blocking and Locking Analysis).

- Click Run.

- Monitor progress in the Console panel; the HTML report appears in the Report panel when complete.

3) API behavior by provider

3.1) AzureOpenAI: Responses API

AI SQL Tuner Studio uses the Azure OpenAI Responses API for GPT-family models instead of older chat-completions-style integration.

What this means for you:

- Use a deployment that supports the Responses API.

- Enter the deployment name in Primary model / Secondary model.

- Do not paste a REST route such as /openai/responses into the endpoint field.

3.2) Anthropic Claude: Messages API via Azure AI Foundry

Claude deployments on Azure AI Foundry are not called through the Azure AI Inference ChatCompletionsClient in this app. Instead, AI SQL Tuner Studio calls the native Anthropic Messages API using your configured endpoint and key.

What this means for you:

- Keep the endpoint field set to the root resource URL.

- Do not append /models or /anthropic/v1/messages.

- Enter the Claude deployment/model name in Primary model / Secondary model.

4) How AI SQL Tuner Studio resolves configuration

Configuration is resolved in the following priority order (highest to lowest):

- Studio UI settings — values entered in the AI Configuration section and saved via Save AI settings.

- Built-in defaults — default deployment names and endpoint provided by AI SQL Tuner LLC.

If no custom endpoint or key is provided, the app uses the default Azure OpenAI endpoint hosted in AI SQL Tuner LLC’s Azure subscription (no business data is transmitted — only SQL Server system metadata).

The built-in defaults currently include both GPT and Claude models; any values saved in the UI override them.
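The two-level priority order can be sketched as a simple overlay of UI values on top of defaults. The function name, the dictionary keys, and the placeholder default endpoint are all hypothetical, and treating an empty field as "not provided" is an assumption; the guide does not describe the app's internal data structures.

```python
def resolve_config(ui_settings: dict, defaults: dict) -> dict:
    """Overlay saved UI values on the built-in defaults."""
    resolved = dict(defaults)
    for key, value in ui_settings.items():
        # Assumption: blank or missing UI fields fall back to the default.
        if value not in (None, ""):
            resolved[key] = value
    return resolved


# Hypothetical keys and placeholder values, for illustration only.
defaults = {"endpoint": "https://default.example/", "primary_model": "gpt-5.4"}
ui = {"endpoint": "", "primary_model": "claude-sonnet-4-6"}
config = resolve_config(ui, defaults)
```

Here config keeps the default endpoint because the UI field is blank, and takes the primary model from the UI.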

5) Common configuration problems

“Azure OpenAI endpoint or API key is not configured or is empty”

- In the Studio UI, expand AI Configuration and verify the endpoint and key fields are filled in.

- Ensure the endpoint ends with /.

- If using the Save key option, click Save AI settings to persist.

Endpoint looks correct but streaming fails

- Verify the endpoint is the resource root endpoint (not a portal URL).

- Do not append /openai, /models, or /anthropic/v1/messages.

- Ensure the configured model/deployment exists under that resource.

- Try a different deployment/model name in the Primary model field.

AzureOpenAI deployment works elsewhere but fails here

- Confirm the deployment supports the Responses API.

- Ensure you entered the deployment name, not the raw model family name unless your deployment uses that exact name.

Anthropic deployment returns unsupported API or path errors

- Select Anthropic as the provider in AI SQL Tuner Studio.

- Use the root Azure endpoint such as https://your-resource.cognitiveservices.azure.com/.

- Do not enter /anthropic/v1/messages manually.

- Ensure the configured Claude model/deployment name matches what is available in your Foundry project.

“Using model/deployment: …” is not what you expected

- Set the Primary model field explicitly in the AI Configuration section.

- Confirm the configured deployment/model name matches exactly.

- Verify the selected provider matches the model family (GPT vs Claude).
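A quick way to catch a provider/model mismatch is a name-based check like the Python sketch below. It is only a heuristic with a hypothetical function name: it assumes Claude deployments have names starting with "claude", which holds for the examples in this guide but not necessarily for custom deployment names.

```python
def provider_matches_model(provider: str, model_name: str) -> bool:
    """Heuristically check that the provider fits the model family."""
    # Assumption: Claude deployment names start with "claude".
    is_claude = model_name.strip().lower().startswith("claude")
    if provider == "Anthropic":
        return is_claude
    if provider == "AzureOpenAI":
        return not is_claude
    raise ValueError(f"unknown provider: {provider!r}")
```

For instance, pairing AzureOpenAI with claude-opus-4-6 returns False, which points to the provider setting as the first thing to correct.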

Request size exceeds limit

- The Studio will display a dialog if the request is too large.

- Configure a Secondary model with a larger context window, or reduce the scope of the analysis (e.g., fewer objects for Code Review).

6) Quick checklist

- Provider selected correctly: AzureOpenAI for GPT or Anthropic for Claude

- Endpoint set to the resource root such as https://...cognitiveservices.azure.com/

- API key entered

- Primary model / deployment name set

- Model/deployment exists in Azure OpenAI or Azure AI Foundry

- Clicked Save AI settings

- Run an analysis and verify the console shows your deployment name

7) Examples

7.1) Use a different GPT deployment

- Open the AI Configuration section in the left panel.

- Set provider to AzureOpenAI.

- Set Primary model to gpt-5.4, gpt-5.3-codex, or any deployment name you created. (See GPT-5.4 support added in 1.0.26 to learn more about OpenAI GPT 5.4 benefits.)

- Click Save AI settings.

- Select a connection and tuning goal, then click Run.

To use a model-router deployment, enter model-router in the Primary model field and click Save AI settings.

7.2) Use Claude on Azure AI Foundry

You can also use Anthropic models such as Claude Sonnet 4.6 and Opus 4.6 or 4.7.

- Open the AI Configuration section.

- Set provider to Anthropic.

- Set AZURE_OPENAI_ENDPOINT to your resource root, for example https://your-resource.cognitiveservices.azure.com/.

- Set AZURE_OPENAI_KEY to your Azure AI Foundry-compatible key.

- Set Primary model to a Claude deployment/model name such as claude-sonnet-4-6.

- Optionally set Secondary model to another Claude deployment such as claude-sonnet-4-5.

- Click Save AI settings and run an analysis.

If you need support, please include:

- The selected provider (AzureOpenAI or Anthropic).

- The deployment/model name you used (shown in the console output).

- The exact error message.

Frequently Asked Questions

What is an AI endpoint and why do I need one?

An AI endpoint is a URL that connects AI SQL Tuner Studio to an AI model — either Azure OpenAI for GPT-family models, or Azure AI Foundry for Anthropic Claude models. By configuring your own endpoint, you keep full control over data residency, billing, and which model version the Studio uses for query analysis. This is ideal for organizations with strict compliance, network, or data-locality requirements.

Which AI models can I use with AI SQL Tuner Studio?

AI SQL Tuner Studio supports two providers. Through the AzureOpenAI provider you can use any GPT-family model deployed on Azure OpenAI that supports the Responses API — common choices include gpt-5.4, gpt-5.3-codex, and model-router. Through the Anthropic provider you can use Claude models hosted on Azure AI Foundry, including claude-sonnet-4-6, claude-sonnet-4-5, claude-opus-4-6, and claude-opus-4-7-1. Check the deployment in your Foundry project or Azure portal to confirm the exact name.

How do I find my Azure OpenAI or Foundry endpoint URL?

For an Azure OpenAI resource, open the resource in the Azure Portal, navigate to Keys and Endpoint, and copy the URL — it follows the format https://<your-resource>.cognitiveservices.azure.com/. For a Microsoft Foundry project, open https://ai.azure.com/, select your project, and locate the endpoint details. Always use the root endpoint (ending with /) — do not append /openai, /models, or /anthropic/v1/messages.

What should I do if my AI endpoint connection fails?

Start by confirming the provider selection (AzureOpenAI vs Anthropic) matches the model family of your deployment. Then verify the endpoint URL is the resource root (ending with /), the API key is current, and the deployment/model name matches exactly what you created in Azure. Section 5 of this guide lists the most common errors with specific resolutions. The Studio’s console output shows the exact error returned by the API to help you diagnose.

Is my data secure when using a custom AI endpoint?

Yes. When you configure your own endpoint, all API calls go directly from AI SQL Tuner Studio to your Azure resource — your query data never passes through third-party servers. You can further restrict access using Azure Private Endpoints, network security groups, and role-based access control. The Studio transmits only SQL Server system metadata and the code being analyzed; it does not send business data from tables.

What’s the difference between using Azure OpenAI and Anthropic in AI SQL Tuner Studio?

The AzureOpenAI provider uses GPT-family models (GPT-5.4, GPT-5.3-codex, model-router) called through the Azure OpenAI Responses API. The Anthropic provider uses Claude models (Claude Sonnet 4.6, Claude Opus 4.6, Claude Opus 4.7) called through the native Anthropic Messages API on Azure AI Foundry. Both run inside the Azure trust boundary. Choose based on which model family your team prefers, which deployments are available in your tenant, and which model excels at the kind of tuning analysis you do most.

Why does AI SQL Tuner need the root endpoint instead of /anthropic/v1/messages?

AI SQL Tuner Studio constructs the full API route internally. For the Anthropic provider, it transforms your root endpoint (https://your-resource.cognitiveservices.azure.com/) into the Foundry inference host (https://your-resource.services.ai.azure.com/) and appends /anthropic/v1/messages with the required Authorization: Bearer <key> and anthropic-version headers. Pasting the full route into the endpoint field would cause the URL construction to break.